People are remarkably accurate when doing a wide range of activities, including working with spreadsheets. Even so, the bottom line result of every spreadsheet is almost certainly wrong.

For example, if you have a spreadsheet consisting of 100 used cells and a moderate 3% probability that each cell contains an error, then the probability that the spreadsheet's results are wrong is 95%.

This article explores how and why the cascading structure of spreadsheets leads to a high error rate, and then we consider what we can do about it.

A trivial spreadsheet

Suppose that you want to know how much your sales have changed so far this year compared with the same months last year.

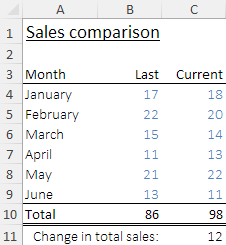

To answer this question, you build a trivial spreadsheet that collates the sales figures for January to June of the current and last years. You sum over the months of each year, and then calculate the change between the years.

A screenshot of the spreadsheet is shown to the right. Note that this example is available for download at the bottom of this page.

The spreadsheet shows that January to June sales increased from 86 last year to 98 this year, a change of plus 12. That is a good result, so you are pleased.

Unfortunately, the spreadsheet is wrong.

Before proceeding, can you see the error?

Spot the error

The problem with our trivial sales comparison spreadsheet is that the total for last year is calculated as =SUM(B4:B8). That is, the total for last year omits the June figure, while the total for this year includes all the months. If the total for last year included June too, then the change in sales would be minus 1 – a very different result.

This trivial example includes a simple error that cascades down to the bottom line result (the change in sales). The error is not obvious because the spreadsheet displays reasonable looking values in each cell. Only when we carefully check the data and the formulae does the error become apparent.

Essentially the problem here is that the formulae are hidden behind the displayed results. If the results look reasonable, then we tend to believe them.

We're 95% to 99% accurate when doing a wide range of activities

People are remarkably accurate, so it seems sensible to believe results that look reasonable. Across a wide range of non-trivial cognitive activities – such as reading a number from a chart, writing a line of computer programming code, and dialling a 10 digit number – we get things right typically between 95% and 99% of the time.

On the rare occasions when we do make an error, it is likely to be minor. Often we don't even notice that we made an error. But when we do notice an error, we usually correct it immediately. As a consequence, we tend to be very confident about our accuracy and about our ability to detect and correct the few errors that we do make.

Building a spreadsheet is no different

In a spreadsheet, the basic unit of activity is the cell. That's where we enter data or write a formula. Research has shown that when working with spreadsheet cells we are, again, between 95% and 99% accurate (after correcting errors that we notice immediately).

This means that we have a "cell error rate" (CER) of 1% to 5%. This error rate is consistent with other activities, and it seems low, so we are generally confident about the accuracy of our spreadsheets.

Beware the calculation cascade effect

But our confidence is misplaced. Given how spreadsheets work, a cell error rate of only 1% to 5% is very likely — or in larger spreadsheets, almost certain — to lead to incorrect bottom line results.

To see why this is, consider that a typical spreadsheet consists of a cascade of calculations:

- We start with some data cells containing values.

- Then we have some formulae that refer to the data cells.

- There may be further formulae cells that refer to previous formulae cells.

- Finally, we have more formulae that refer to previous cells, calculating the results we want.

If any of the data or formulae cells contains an error, then that error will cascade down to subsequent cells and through to the results. This happens because the cells are linked in a series of calculations.

The errors we make in spreadsheets are often minor, like referencing the wrong cell, typing a number incorrectly, or inserting a row without adjusting formulae that reference that range. The issue is that for a bottom line result to be correct it depends on all previous calculations being correct.

This situation is different to, for example, making an error in a document. A typographical error may change the meaning of a word or a sentence, but it is unlikely to change the meaning of the entire document because subsequent words are not linked in a cascade like they are in a spreadsheet.

Modelling the calculation cascade

Given the calculation cascade effect, how likely is it that a spreadsheet's bottom line result is correct? To find out, let's develop a simple model. We can assume that:

- The data or formula in each cell has a small probability of containing an error.

- The probability of an error in a cell is independent of all other cells.

- All cells are linked to other cells, so that errors cascade through to the bottom line results.

Of course, in building a spreadsheet the independence assumption may not be true. For example, we might write a formula in a cell and then copy it to multiple cells. Consequently, we often have groups of similar cells. But we can also make errors in copying cells, such as having an incorrect relative reference, or failing to copy to all relevant cells. Cell independence is a useful approximation for most spreadsheets, so we'll use it here as an assumption.

Given our assumptions, the probability that the bottom line results contain at least one error can be modelled as follows:

- Let's define

pto be the probability of an error in a cell (i.e. the Cell Error Rate). - Therefore, the probability that a cell does not contain an error is

1-p. - Suppose that there are

ncells used in the spreadsheet. - Let's also define

kto be the number of used cells that contain an error. - The probability that none of the used cells contains an error is

P(k = 0) = (1-p)n. - Finally, the probability that the spreadsheet contains at least one error is

P(k >= 1) = 1 - (1-p)n.

Cascade model results

For various cell error rates (0% to 5%) and number of used cells (15, 100, and 1000), the following chart shows the probability that a spreadsheet contains at least one incorrect cell.

Our sales spreadsheet example above contains 15 used cells – twelve for data and three formulae. If we have a moderate 3% probability of making an error in any cell, then the probability that the resulting change in sales calculation is wrong is 37%. That is, even with this trivial spreadsheet, there’s only a 63% probability that the result is correct.

If we have a somewhat larger, though still small, spreadsheet consisting of 100 used cells and a moderate cell error rate of 3%, then the probability that the spreadsheet's results are wrong is 95%. That is, even a small spreadsheet is almost certain to be wrong. Such errors might be minor, but they could also be catastrophic.

For a spreadsheet that has 1,000 used cells, the probability of error is essentially 100% for any cell error rate greater than a very careful 0.5%.

This is a surprising result, so it is worth contemplating for a moment. Even though we are remarkably accurate, only rarely making errors, the linked cascading structure of a spreadsheet means that it is almost certain that the results are wrong.

How many errors is my spreadsheet likely to contain?

Given our simple model of errors, we can represent the probability distribution of the number of errors using a Binomial distribution.

For example, for a cell error rate of 3% and 100 used cells, the following chart shows the probability distribution for the number of cells (k) that contain an error.

If our spreadsheet has only 100 used cells, then there are most likely 2 or 3 errors – though there is a 35% probably of there being 4 or more errors.

Note that the value for zero error cells equals the (1-p)n value that we derived previously – in this example, (1-0.03)100 = 4.8%, i.e. about 5% probability that the spreadsheet is correct (or, equivalently, a 95% probability that the spreadsheet is wrong).

Download the spreadsheet at the bottom of this page to see how these calculations are implemented, along with additional explanatory notes.

Are spreadsheet errors that common in practice?

Research shows that almost all spreadsheets contain errors. The spreadsheet error rate found by academic studies is similar to our simple error model, at about 95%. So, the short answer is yes, spreadsheet errors are that common in practice.

One leading researcher concluded that "Every study, without exception, has found error rates much higher than organizations would wish to tolerate." In other words, spreadsheet errors are a real and substantial problem.

To reduce errors: Use good practices and test your spreadsheets

Given that even a small spreadsheet is likely to contain errors, what can we do?

In creating software, programmers have learnt that they need to follow good development practices and do extensive testing. Typically, 30% to 60% of the total software development effort goes into detecting and correcting errors. Good program testing practice includes inspecting every line of code and testing modules to ensure that they behave as expected.

In contrast, spreadsheet builders typically put little or no effort into testing. Provided the spreadsheet produces a result that looks reasonable, we assume that it is correct. The outcome is, unlike computer software, spreadsheets are almost always essentially untested.

There are basically two things that we can do to reduce spreadsheet errors:

- Use good spreadsheet development practices to help reduce the occurrence of errors and make them easier to find.

- Learn from our software programming colleagues and thoroughly test our spreadsheets. That means inspecting every cell to look for errors and testing each part of the spreadsheet to ensure that it behaves as expected.

Further reading

There is an extensive academic and practitioner literature dedicated to improving the quality of spreadsheets. As a starting point for learning more, have a look at the following papers.

Spreadsheet errors:

- What we know about spreadsheet errors.

- Spreadsheet errors: What we know; what we think we can do.

- What we don't know about spreadsheet errors today: The facts, why we don't believe them, and what we need to do.

- The cognitive science of spreadsheet errors: Why thinking is bad.

Spreadsheet development good practice:

- New guidelines for writing spreadsheets.

- Spreadsheet issues: Pitfalls, best practices, and practical tips.

- Spreadsheet modelling best practice.

- A maintainability checklist for spreadsheets.

Spreadsheet testing:

- Recommended practices for spreadsheet testing.

- Development and testing of spreadsheet applications.

- Detecting and refactoring code smells in spreadsheet formulas.

Download the Sales example and error models spreadsheet

If you have any comments about this article, then please contact us.