Authors

Gregg Rothermel, Margaret Burnett, Lixin Li, Christopher DuPuis, & Andrei Sheretov

Abstract

Spreadsheet languages, which include commercial spreadsheets and various research systems, have had a substantial impact on end-user computing. Research shows, however, that spreadsheets often contain faults; thus, we would like to provide at least some of the benefits of formal testing methodologies to the creators of spreadsheets.

This article presents a testing methodology that adapts data flow adequacy criteria and coverage monitoring to the task of testing spreadsheets.

To accommodate the evaluation model used with spreadsheets, and the interactive process by which they are created, our methodology is incremental. To accommodate the users of spreadsheet languages, we provide an interface to our methodology that does not require an understanding of testing theory.

We have implemented our testing methodology in the context of the Forms/3 visual spreadsheet language.

We report on the methodology, its time and space costs, and the mapping from the testing strategy to the user interface. In an empirical study, we found that test suites created according to our methodology detected, on average, 81% of the faults in a set of faulty spreadsheets, significantly outperforming randomly generated test suites.
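To make the idea of data flow (du) adequacy concrete, here is a minimal sketch in Python. It is not the Forms/3 implementation. It assumes a simplified model in which each branch of a cell's formula counts as a definition of that cell, and each reference to that cell from another formula counts as a use. The `DuAssociation` type, the `du_coverage` function, and the cells `Score`, `Grade`, and `Message` are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DuAssociation:
    """One definition-use pair between two cells (hypothetical model)."""
    def_cell: str    # cell whose formula defines the value
    def_branch: str  # branch of that formula that produced the value
    use_cell: str    # cell whose formula references def_cell


def du_coverage(all_assocs, exercised):
    """Return the fraction of du-associations exercised by validated tests."""
    all_assocs = set(all_assocs)
    if not all_assocs:
        return 1.0  # nothing to cover, so trivially adequate
    return len(all_assocs & set(exercised)) / len(all_assocs)


# Assumed example spreadsheet:
#   Grade   = if Score >= 50 then "pass" else "fail"
#   Message = "Result: " + Grade
then_to_message = DuAssociation("Grade", "then", "Message")
else_to_message = DuAssociation("Grade", "else", "Message")
all_assocs = {then_to_message, else_to_message}

# A test with Score = 70 exercises only the "then" definition of Grade.
after_first_test = du_coverage(all_assocs, {then_to_message})

# Adding a test with Score = 30 exercises the "else" definition as well.
after_second_test = du_coverage(all_assocs, {then_to_message, else_to_message})
```

Under these assumptions, a test suite is du-adequate once the coverage reaches 1.0, which here takes one test per branch of `Grade`.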

Sample

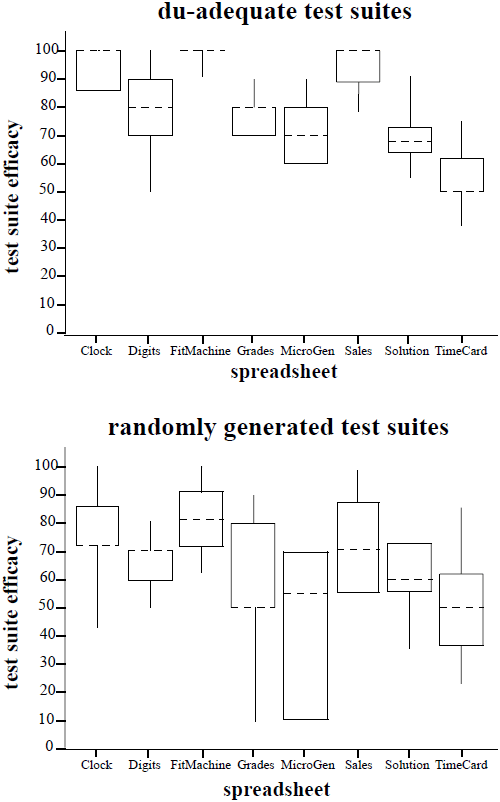

This figure contains boxplots depicting the ranges and medians of the fault-detection efficacies of the test suites.

The top graph presents results for du-adequate test suites; the bottom graph presents results for randomly generated test suites. Each graph contains eight boxplots: one for each of the eight subject spreadsheets studied.

The boxplots show that the efficacies of randomly generated test suites varied more widely than those of du-adequate test suites on every spreadsheet except Digits.

The boxplots also illustrate that the median efficacies exhibited by randomly generated test suites were lower than those exhibited by du-adequate test suites.

Publication

ACM Transactions on Software Engineering and Methodology, Volume 10, Number 1, January 2001, pages 110-147